Last updated on March 1, 2013

Warning: The following text may contain personal attacks and wild speculations about certain people. This will not make any future attempts of dialogue with them any easier, but in my humble opinion they have had their chances.

On 25 June, 2011, Janette Sherman and Joe Mangano (from now on I will refer to them as S&M) had a new article in San Francisco Bay View. We just noticed it, almost a month after it was written. It has the title: “Question marks, the elephant in the room and the refusal of nuclear power defenders to consider what has happened to people and the environment since Fukushima and Chernobyl”. After browsing it I scratch my eyes, think for a few minutes (ok, I try to think, head hurts so much…), and then I read it again. Let’s take a closer look at what they write.

First a short introduction to set the stage:

By concentrating only on the CDC (Centers for Disease Control) data – incomplete at best – and ignoring the on-going radioactive releases from Fukushima, it is apparent that the pro-nuclear forces are alive and active.

Hey, wait a minute. It was not the pro-nuclear forces (whatever that is, but let’s embrace the term with a jolly “Fooorward!”) who started tampering with the CDC data in a way that would flunk any undergraduate student in Statistics 101, it was you, remember? This does not mean that we ignore the rest of the issue, but we do take offence when anti-nuclear forces fail to use the information from Fukushima to their advantage and have to cook up alarmistic results in order to make the situation look worse than it is. This is indeed very remarkable, aren’t the actual events in Fukushima bad enough for you?!?

If I had the mindset of S&M, I would write

By mis-treating the CDC data – incomplete at best – and ignoring all knowledge about radiation effects, and actual radiation levels in the US due to Fukushima, it is apparent that the anti-nuclear scaremongers are alive and active.

This is clearly not a way forward, at least not if you hope for a dialogue and an improvement of the nuclear debate. Oh well, let’s move on.

The second section explains that the titles of the previous articles (there are two versions, one in CounterPunch, and one in San Francisco Bay View) include question marks in order to

stimulate interest and prompt demand for governments – Japan and the U. S. at least – to provide definitive and timely data about the levels of radioactivity in food, air and water.

Hm, Janette and Joe. May I kindly ask: Wouldn’t it be better to try to stimulate this interest in a way that does not include cherry-picking, unfounded alarmism that scares the heck out of millions of parents to infants, and a way of throwing random pieces of data around that should reduce whatever credibility you might have had before to a new low point?

Next section:

We received many responses, some in support of our concerns and some critical about how we used CDC data, including outright ad hominid attacks accusing us of scaremongering and deliberate fraud.

Oh really? I guess that I personally have to plead guilty to this charge, but they link to the blog entry by Michael Moyer in Scientific American as an example of an ad hominid attack. I re-read Moyer’s scrutiny of the CounterPunch article, but fail to see any personal attacks there. Ok, he uses words like “scaremongering”, “froth up”, “data fixing”, “critically flawed – if not deliberate mistruths”. Still, Moyer attacks S&M’s actions, not their personal traits.

Or do S&M really mean ad hominid attacks? My first assumption was that they mixed up “ad hominem” and “ad hominid“, but maybe they do know the difference? I bring the rest of us up to date by quoting the text on this link: “The former [ad hominem] is a criticism of a particular person; the latter [ad hominid] is a commentary upon a species.” So, have Moyer, myself, or anybody else involved in this issue, referred to S&M as neanderthals, platypus, or similar? Not that I can see, but it could be an interesting path to digress upon. Anyhow: Sensitive bunch, those scaremongers…

Now it becomes interesting:

Given the fallibility of humankind, we may have erred, and if so, will admit it. Given the delay in collecting data and the incompleteness of the collection, the criticism may be valid. MMWR (CDC’s Morbidity and Mortality Weekly Report) death reports have certain limits – representing only 30 percent of all U.S. deaths. They list deaths by place of occurrence, while final statistics are place of residence and deaths by the week the report is filed to the local health department, rather than date of death. Finally, some cities do not submit reports for all weeks. The CDC data are available at http://www.cdc.gov/mmwr/mmwr_wk/wk_cvol.html.

So, they admit that they may have erred, or do they say that they may admit it if proven to be the case? Or…? I am not sure, but this is probably as close to admitting anything that they will ever be. It is of course not their fault, it is the limitations of the CDC data that we should put the blame on, not a second thought about their method.

My first interpretation from reading the section is that they admit that the statement in the first articles about a statistically significant 35% increase in infant deaths may not be correct (no matter who is to blame). Unfortunately, it turns out that I am severely mistaken in my interpretation:

Since the article was originally published, we have had the chance to further analyze the CDC data. Historically, the change in infant deaths for the previous six years in eight Pacific Northwest cities from weeks 8-11 (pre-Fukushima) to weeks 12-21 (post-Fukushima) is about 6 percent – never above 11 percent. But in 2011, the change was 35 percent, far above anything ever experienced.

The same eight cities, the same comparison – four weeks 8-11 vs. 10 weeks 12-21 infant deaths:

- 2005 +4.1 percent

- 2006 +10.0 percent

- 2007 +5.1 percent

- 2008 +5.5 percent

- 2009 +2.8 percent

- 2010 +10.9 percent

The average for 2005-2010 is + 6.1 percent for a total of 1,249 infant deaths.

- 2011 +35.1 percent (162 infant deaths)

Before 2005, there were missing data. But the years 2005 to 2010 are about 98 percent complete.

Argh! Now I really do want to turn ad hominid on these people, whatever species (sparsis timoris?) they may belong to! First they say that maybe they were wrong and if so they maybe will admit it. Then they go on and make more “analysis” from the CDC data base, using the same lousy way of handling the data!

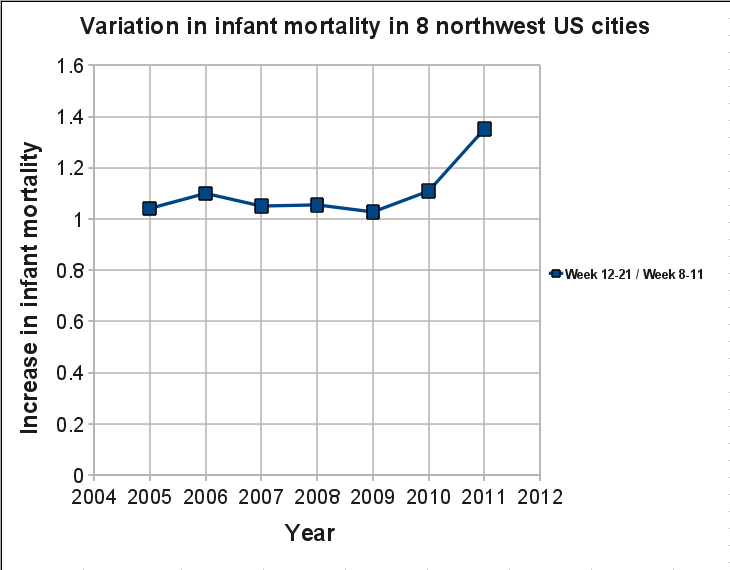

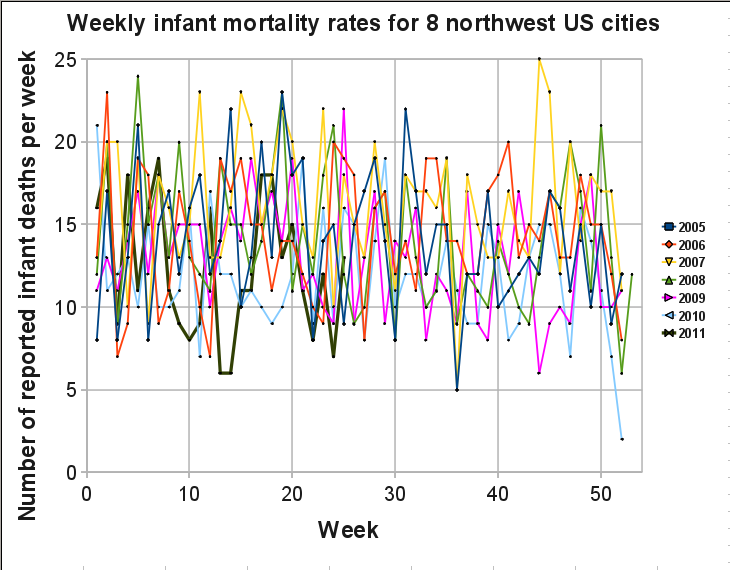

There has been plenty of text here, so let’s lighten up with a few plots, showing the CDC data S&M are mistreating. The plots from my previous posts about S&M should be enough for saying that this is rubbish, but one more round with the CDC data base will not hurt, in case that somebody still believes in S&M’s fables. We start with plotting the change in infant deaths between weeks 8-11 and weeks 12-21 for the years 2005-2011, i.e. the ones that S&M now claim to have done a more careful analysis on.

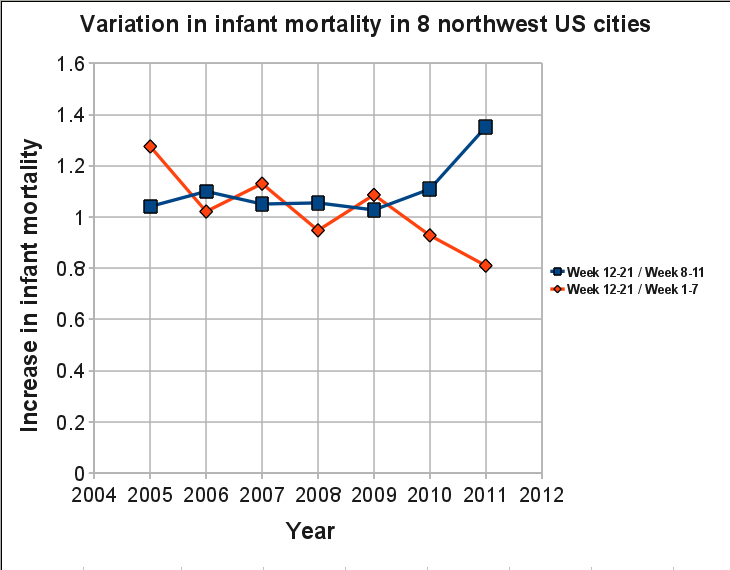

The blue squares show the data as given by S&M. Nothing wrong in the data, this is what you get when you do the same treatment as S&M, weeks 12-21 give a higher weekly infant mortality rate than weeks 8-11, with a few percent every year. Except for 2011 where the 35% increase looks really alarming. But we know from before that they have cherry picked the weeks by only using four weeks before Fukushima and 10 weeks after. If we also plot the ratio of weeks 12-21 over weeks 1-7, shown as red diamonds, we get the following trend:

Quite interesting that there is a decreasing trend, and that the ratio is at its lowest level this year: a 20% reduction, whereas in earlier years there was usually a slight increase (the 30% for 2005 is almost as much as the 35% that S&M have made so much noise about). In other words, if we compare the weeks after Fukushima with weeks 1-7, there seems to be a very beneficial effect on child mortality. Could it be that hot particles are beneficial for infants? This is rubbish, of course, but so is the question asked by S&M.
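For readers who want to check the arithmetic, here is a minimal sketch of how such a percentage comes about: it is the change in mean weekly deaths between two windows of different length. The counts 37 (weeks 8-11) and 125 (weeks 12-21) are an assumption, not taken from this post; they are chosen because they are consistent with the quoted total of 162 deaths and the +35.1 percent for 2011. The function name is hypothetical.

```python
def weekly_rate_change(deaths_before, weeks_before, deaths_after, weeks_after):
    """Percent change in mean weekly deaths from the first window to the second."""
    rate_before = deaths_before / weeks_before
    rate_after = deaths_after / weeks_after
    return 100.0 * (rate_after / rate_before - 1.0)

# ASSUMED split of the 162 deaths quoted for 2011 (not given in the post):
# 37 deaths in the 4 weeks 8-11, 125 deaths in the 10 weeks 12-21.
change_2011 = weekly_rate_change(37, 4, 125, 10)
print(f"{change_2011:+.1f} percent")  # prints "+35.1 percent"
```

Note that only the first window changes when the baseline is switched from weeks 8-11 to weeks 1-7, so the sign of the result depends entirely on which baseline one happens to pick.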

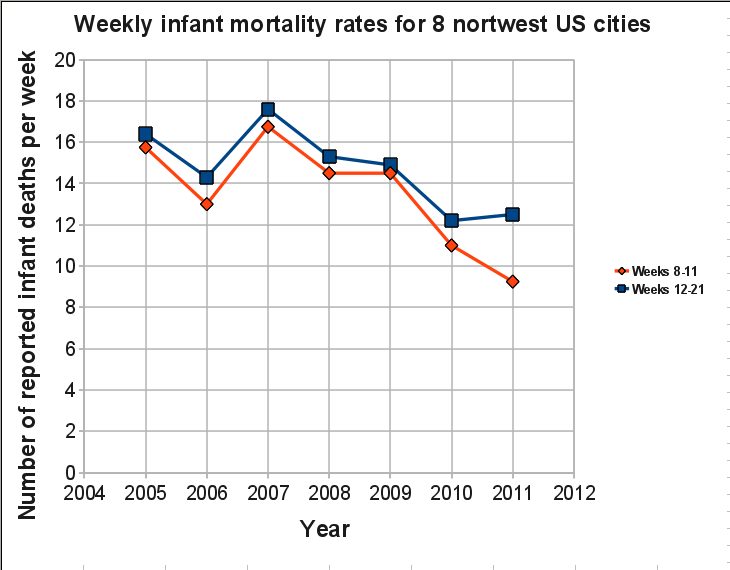

Still, why the drastic increase this year if they only used four weeks for the years 2005-2010 as well? And why do the results look completely opposite if we compare with weeks 1-7 instead of weeks 8-11? The answer is: statistical variations. We are watching random noise, not real trends due to a single cause that can be easily deduced. To see real trends we need to look at longer time spans (for a start; we probably need to do a lot more as well, but let us not confuse the S&M fans by introducing too much real scientific reasoning). But in order to understand the discrepancy, we have to remember that they present the ratio between the two time periods, not the actual numbers. In the original articles S&M talk about increased infant mortality (you may remember that the articles start with “U.S. babies are dying at an increased rate.”). Let’s take a look at this.

Now we see something interesting. Not only is the number of infant deaths for weeks 8-11 at an all-time low during 2011, the number of infant deaths for weeks 12-21 is also at a very low level! U.S. babies are dying at an increased rate? I think not! S&M are shamelessly (if they really believe in their own conclusions then they are indeed very incompetent) showing results for relative numbers, not absolute numbers. Perfectly ok if you are honest with how you handle the data, can refrain from cherry picking the weeks and the areas, and do not suffer from an alarmistic version of Tourette’s syndrome. But S&M prefer to show a relative increase of 35% for this year, while the absolute numbers show a decrease when comparing with earlier years.

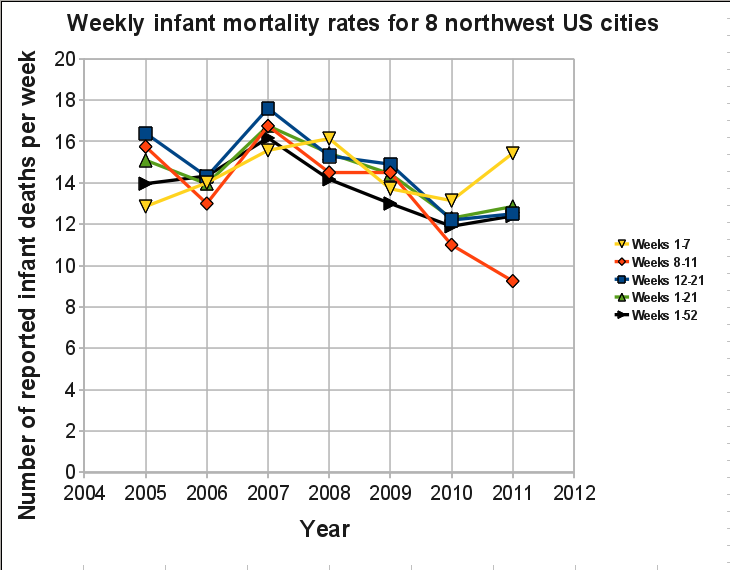

Still not convinced? Let us include weeks 1-7, weeks 1-21, and weeks 1-52 for each year. Please note that the value for 2011 in the weeks 1-52 (black line) only includes the data for weeks 1-25, so it can change somewhat depending on the number of infant deaths that will occur during weeks 26-52 (S&M would probably be able to predict the future, I will not make any such attempts).

If anybody wants to pursue the idea that there is a drastic increase of infant deaths in northwest U.S. after Fukushima, that person will have many things to explain, for instance the drastic increase in infant mortality this year for weeks 1-7. The data does not support the idea of increased infant mortality due to Fukushima, no matter what S&M say.
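The point about random noise can be made concrete with a small simulation. This is only a sketch under stated assumptions, not an analysis of the CDC data: weekly deaths are drawn from a Poisson distribution with a constant mean, so by construction nothing changes between the two windows. The weekly mean of 12 is a hypothetical value of roughly the size discussed here, and the function names are made up for the illustration.

```python
import math
import random
import statistics

def poisson(rng, lam):
    """Draw one Poisson(lam) sample using Knuth's multiplication method."""
    threshold = math.exp(-lam)
    k, p = 0, 1.0
    while True:
        p *= rng.random()
        if p <= threshold:
            return k
        k += 1

def simulated_change(rng, weekly_mean):
    """Percent change in mean weekly deaths, weeks 8-11 vs weeks 12-21,
    when the true underlying rate is identical in both windows."""
    before = sum(poisson(rng, weekly_mean) for _ in range(4))
    after = sum(poisson(rng, weekly_mean) for _ in range(10))
    if before == 0:
        return None  # ratio undefined; practically never happens at this mean
    return 100.0 * ((after / 10) / (before / 4) - 1.0)

rng = random.Random(2011)  # fixed seed so the run is repeatable
WEEKLY_MEAN = 12.0         # HYPOTHETICAL weekly mean, not taken from the CDC data

changes = [c for c in (simulated_change(rng, WEEKLY_MEAN) for _ in range(5000))
           if c is not None]
spread = statistics.pstdev(changes)
share_large = sum(abs(c) >= 20 for c in changes) / len(changes)

print(f"standard deviation of the 'change': {spread:.1f} percentage points")
print(f"share of simulated years with a change of 20 percent or more: {share_large:.2f}")
```

With counts this small, a four-week baseline wobbles considerably from chance alone, which is why a single year's ratio says very little on its own.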

One more quote from the article by S&M:

We acknowledge that many factors can cause infant deaths, but the critics who ignore Japanese fallout as possible contributing factors are acting irresponsibly.

So, cheating with the CDC data is a more responsible way to act? Nobody is ignoring the fallout. We are just fed up with false claims wrapped in an alarmistic package. Therefore I will, like many others, once again ignore everything else that is written in the S&M article; a mixture of some valid concerns that are heavily diluted with half-truths, advertisement about the Chernobyl book (edited by Sherman) and other reports (written by Mangano), scaremongering, claims about lies and cover up, and some more nonsense claims. It would be interesting to discuss the valid concerns that are addressed, but if we every time have to filter it out from a sea of myths then we would rather spend our time elsewhere.

Janette Sherman and Joseph Mangano: We do care about the consequences from Fukushima, many of them will surely be serious. Many of us are also annoyed by the lack of interest from the media to write properly about the present status. But we do not subscribe to your way of portraying it, as long as you show yourself unable to stick to the truth. Concerned citizens have nothing to learn from you. We do see the elephant in the room while you try to make it into a mammoth. May your methods and your dishonest claims suffer the same fate as this extinct species.

One final quote. In the beginning of the article there is a picture of a pretty baby, with the following figure caption:

Not only is it unthinkable to put our babies at risk by continued use of nuclear power plants, but infant mortality is an indication of an entire population’s health. When an unusual number of babies are dying, we are all at risk and must take a stand.

S&M must have plotted all the data from 2005 until now. If not, here I have done it for you:

Ok, I plot all years over each other, a bit messy. A better alternative would be to plot the years after each other, as done in this figure by Alexey Goldin in his entertaining statistics analysis of the S&M hoax. One may also try to see seasonal trends by plotting mean values for a number of years. In whatever way, S&M still owe us an explanation for their claim of an unusual number of babies that are dying. Can we close this chapter now, please?

Ad hominem/hominid attacks

I start to ask myself, are there Cliffs Notes for epidemiology? Is this how Mangano passed his courses for the MPH degree? Since my first encounter with Cliffs Notes about 20 years ago as a foreign exchange student in the U.S., the company seems to have expanded its activities substantially, and the notes are also available on the internet. At that time I was only aware of Cliffs Notes that cover novels that you are expected to read in school. If you are too lazy to read the novel, the Cliffs Notes give a summary of the book, typical issues and questions that are likely to be on the exam (or good to be aware of if you want to pretend that you read the book and do not want to get expelled from the book-reading club), and a few other short cuts for the illiterate who still want to graduate from high school. I find no Cliffs Notes for epidemiology, but there are indeed Cliffs Notes on statistics! Well Joe, if this is how you made it through college, please go back and read the following part:

It is important to realize that statistical significance and substantive, or practical, significance are not the same thing. A small, but important, real-world difference may fail to reach significance in a statistical test. Conversely, a statistically significant finding may have no practical consequence.

In my first post on this subject the title was Shame on you, S&M! I should probably reconsider this title. If they had done this wilfully then I would stand my ground, but after reading their last article I get more and more convinced that it is just incompetence. They truly believe in what they are doing, and because they are victims of the Dunning-Kruger effect there is no way to make them understand that somewhere along the road they lost contact with reality. And just like Helen Caldicott, who in her debate with George Monbiot said “doctors can’t lie”, they are convinced that they speak from a higher moral ground.

So to tell somebody to be ashamed because they are incompetent in their field is about as useful as telling my 3-year old daughter to be ashamed for not knowing Bulgarian grammar. The difference is that my daughter may have a good chance to pick it up in a couple of years, if she would like to. For S&M, who have claimed expertise in their field for a long time, I see no hope at all. Maybe, just maybe, if they read Fooled by Randomness by Nassim Nicholas Taleb, or some similar literature. They could really pick up some good lessons from a few chapters there. But it wouldn’t work; as far as I know, Taleb’s books are not available in a Cliffs Notes format.

/Mattias Lantz – member of the independent network Nuclear Power Yes Please

Further comments

In the first post about S&M (here) I was criticized by a commenter for stating that Mangano has a track record of not handling data in an honest way, but I had not given any reference or link to back up this statement. That has been corrected and I have put two links in that post. But from now on it will be much easier. Three nonsense articles by Joe Mangano in slightly over two weeks, all three based on cherry picking. That is a track record as good (hrm, bad…) as any. To make it worse, good ol’ Joe has proudly put them on the RPHP web page:

http://www.radiation.org/press/pressrelease110607PacificNWdata.html

http://www.radiation.org/press/pressarticle110610CounterPunch.html

http://www.radiation.org/press/pressrelease110607PacificNWReport.html

http://www.radiation.org/press/pressrelease110603PhiladelphiaResults.html

And still no reaction from CounterPunch regarding their strange analysis

I have written twice to Alexander Cockburn, but have received no response. Instead there are a number of new articles that are critical of nuclear power of all kinds. Fine with me, but the lack of interest in getting the strange re-analysis by Pierre Sprey corrected makes me wonder about the statement “Ours is muckraking with a radical attitude”. CounterPunch is certainly full of articles with a lot of attitude, but the muckraking seems to be missing.

Earlier blog entries about S&M

17 June 2011: http://nuclearpoweryesplease.org/blog/2011/06/17/shame-on-you-janette-sherman-and-joseph-mangano/

19 June 2011: http://nuclearpoweryesplease.org/blog/2011/06/19/more-bullshit-from-joseph-mangano-take-2/

Check mate?

Good work!

In my megalomaniacal opinion, you should do the following:

1) Ask some rude friends to find fault in your posts and reasoning.

2) Get a professional from an analytically literate field (not nuclear though) to criticise your post

3) If needed, post a new post with corrections in your reasoning

4) Get a professional “ally” to support you. 2 people is psychologically speaking, much more than 1 person.

5) Get CounterPunch to post a reply. From what I have read, they for sure need to. You might be reasoning faulty, but i haven´t found out where.

6) Keep speaking truth to power.

Hi Kitty, and thanks for your megalomaniacal opinion. Here is a late response to your suggestions.

1) Ask some rude friends to find fault in your posts and reasoning.

Done. For several of the blog entries I asked other members of NPYP to double check the numbers and also to find any logical faults in my reasoning.

2) Get a professional from an analytically literate field (not nuclear though) to criticise your post

Hm, if S&M were doing science it would be worthwhile. In the present case they did not even try to publish it as a scientific article, and they would hopefully not get far if they had tried.

3) If needed, post a new post with corrections in your reasoning

Done, I have updated some entries due to some criticism.

4) Get a professional “ally” to support you. 2 people is psychologically speaking, much more than 1 person.

Not quite sure what you mean. If I need moral support then I will ask for it. In any case, I have had good support in the commentary fields from other people, not the least from several NPYP members.

5) Get CounterPunch to post a reply. From what I have read, they for sure need to. You might be reasoning faulty, but i haven´t found out where.

I have emailed Alexander Cockburn twice, asking for an explanation regarding the Pierre Sprey study, but not a single bleep from him. Instead they have had Sprey extend his already faulty study to more cities in the US. In this case they do not want anybody to question it without paying for it, so I will leave it at that. You can find their teaser here, below “Our latest newsletter”: http://www.counterpunch.org/2011/08/12/riots-and-the-underclass

6) Keep speaking truth to power.

Could be a controversial phrase to use considering where Counterpunch places themselves on the political scale. 😉